In this post, we’ll look at how robots experience the world. We’ll start with how we humans experience it, then draw parallels to robots, with examples of the sensors real robots use.

To understand how robots experience the world, we first consider how humans do. There are five common senses we use to understand the world around us:

Sight from our eyes lets us see the world around us, both near and far. Touch from our hands lets us feel the world around us, from how soft something is to how cold it is. Hearing with our ears lets us pick up sound waves around us, which is useful for knowing if someone is behind us without seeing them. With taste from our tongue and smell from our nose, we know if food is good or bad. Just as we have these body parts that let us experience the world, robots have specific parts called sensors that each let them sense one aspect of the world:

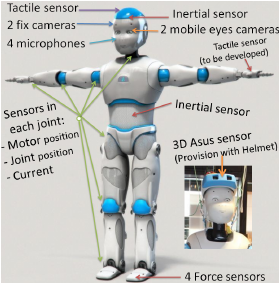

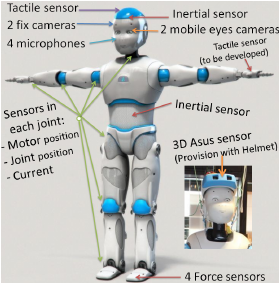

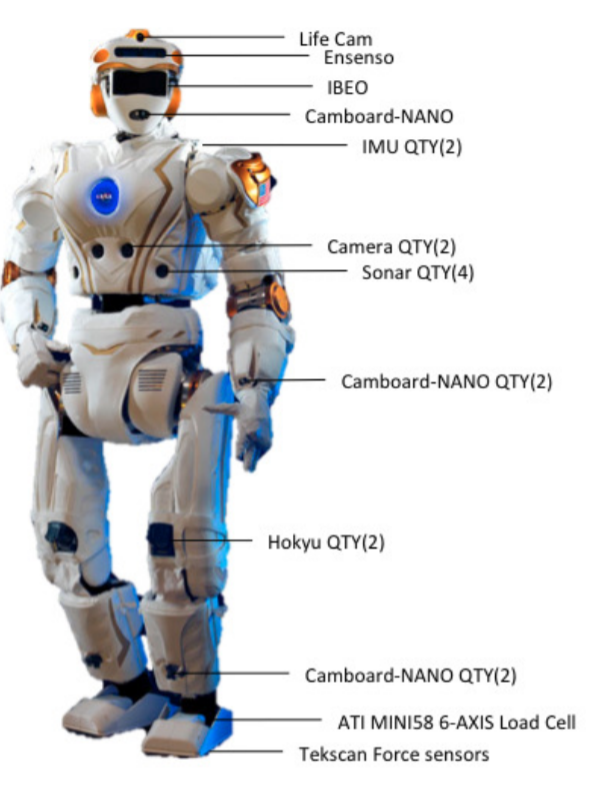

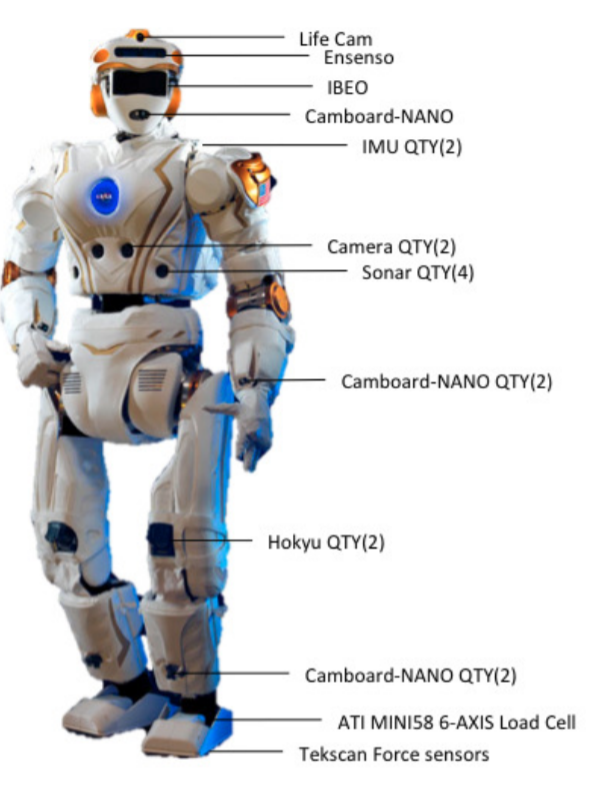

For example, for sight, a camera with computer vision can be used. For hearing, a microphone can translate sound into digital signals the robot can understand, similar to how Siri or Alexa can understand our voices. These sensors let the robot perceive the world around it, and understanding what is around a robot is known as perception. Let’s take a look at some robots and see just how many sensors they use to understand the world around them:

- Gyro Sensor: Used for stabilization, like the fluid in our inner ears that we use for balance

- DMS: infrared distance sensor to avoid running into things

- IR Sensor: reflects infrared light to see if something is nearby and tell what it is from its shape

- 3D Asus Sensor: Depth sensor to determine how far away objects are

- Cameras: For object detection to “see” things

- Microphones: To hear things

- Tactile Sensor: To “feel” things

- Inertial sensor: To track axis movement and tilt/angle

- Force sensors: To feel the ground and help keep balance

- IMU: Inertial measurement unit, to help stay stabilized and upright

- Camera: For object detection, image recognition

- Sonar: For environmental sensing of surrounding objects

- Hokuyo laser scanner: For safety, avoids hitting things by measuring the distance to the nearest object that reflects the laser light

- Tekscan Force sensors: To maintain balance, determine weight on each foot

Another real-world example of how sensors are used to understand the environment comes from Brain Corp floor cleaning “scrubbers”:

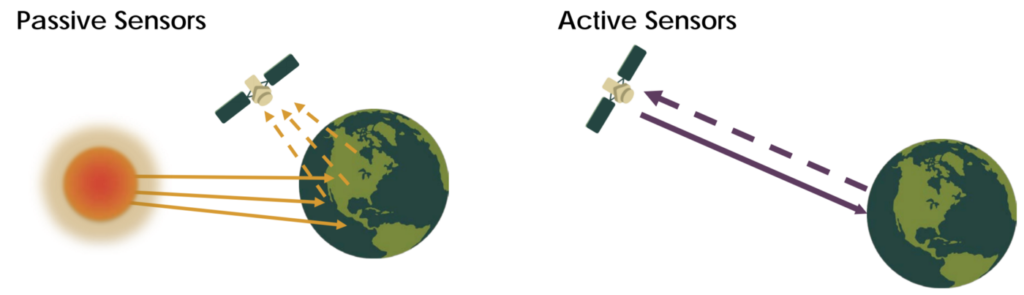

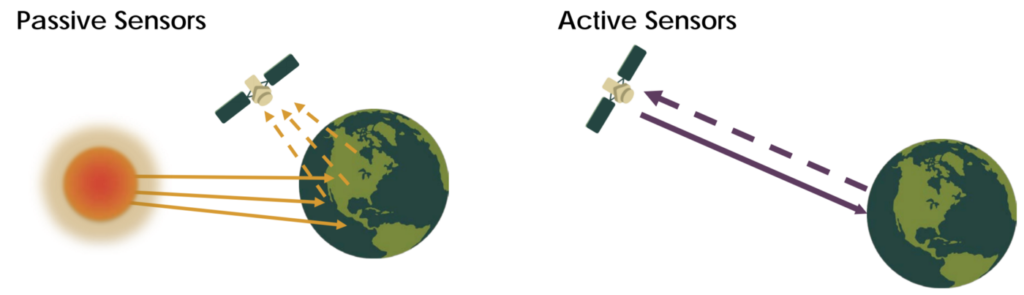

The sensors themselves are also different in how they measure and gather information from the environment. All sensors fall into one of two categories:

Passive: Uses ambient energy to perform the measurement, such as a camera that absorbs light to generate a digital image.

Active: Transmits energy into the environment to perform the measurement, such as a LiDAR or ultrasonic sensor that emits energy waves into the environment and then looks for the reflected waves.

For example, a satellite acts as a passive sensor when it takes pictures with a camera, as the camera is simply absorbing sunlight reflected off the earth. It acts as an active sensor when the satellite itself emits energy into the environment, such as by sending out an electromagnetic pulse and receiving the reflection of that pulse to measure the distance from the satellite to the earth.
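To make the active case concrete, here is a minimal sketch of how an emitted pulse can be turned into a distance. It assumes an ultrasonic sensor in air at roughly room temperature; the function name `echo_distance_m` and the constant are illustrative and not taken from any particular sensor library.

```python
# Approximate speed of sound in air at about 20 °C (an assumption for this sketch).
SPEED_OF_SOUND_M_PER_S = 343.0


def echo_distance_m(round_trip_s, wave_speed_m_per_s=SPEED_OF_SOUND_M_PER_S):
    """Estimate the distance to an object from a pulse's round-trip time."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the object and back, so only half the path counts.
    return wave_speed_m_per_s * round_trip_s / 2.0


# An echo that returns after 10 milliseconds.
print(echo_distance_m(0.010))
```

The same idea applies to a pulse of light or radio waves, with the speed of light passed in as `wave_speed_m_per_s`.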

If you want to continue your robotics learning journey, consider reading up next on how robots see. If you are interested in more detail on robot sensing, keep reading below, or read our related post about why robots don’t like shiny black floors.

Advanced Sensing Concepts

As we have seen above, robots use a variety of sensors to experience the world around them. Sensors can also help keep track of the robot itself, much like body temperature can tell us whether a human is sick (has a fever). These two kinds of sensing, internal and external, are known as:

Proprioception: Estimation of the robot’s internal state, such as its configuration, temperature, electrical current, and axis speed

Exteroception: Estimation of the state of the environment with respect to the robot

The type of data that a given sensor can record is also different, such as:

- Scalar: A numeric metric that can range across an interval such as temperature

- Positional: Coordinates on a plane such as a point (x,y)

- Derivative: Sensed data that changes over time such as motion
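As a rough illustration of how these data types relate, the sketch below derives a scalar speed from two positional readings taken a known time apart. The function name `estimate_speed` and the sample values are hypothetical.

```python
import math


def estimate_speed(start_xy, end_xy, elapsed_s):
    """Estimate speed from two (x, y) positions and the time between them."""
    if elapsed_s <= 0:
        raise ValueError("elapsed time must be positive")
    (x0, y0), (x1, y1) = start_xy, end_xy
    # Straight-line distance covered, divided by the time it took.
    return math.hypot(x1 - x0, y1 - y0) / elapsed_s


# Two positional readings in meters, taken 2 seconds apart.
print(estimate_speed((0.0, 0.0), (3.0, 4.0), 2.0))
```

Here the positions are positional data, the result is a scalar, and the change between readings over time is the derivative information.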

Another important concept is sensor performance. Not all sensors are the same, and they vary drastically in price depending on how accurate and reliable they are. Sensors are described by a range of measurement metrics, such as resolution, linearity, bandwidth/frequency, sensitivity, and error margin.

Sensor Noise: Unwanted energy that affects readings, such as background noise picked up by a microphone. Noise can be systematic (which can be calibrated out) or random.

Sensor Resolution: The smallest change the sensor can distinguish, e.g. a distance sensor that measures in steps of meters vs. centimeters.

Sensor Precision: Reproducibility of measurements (their range or variance) when you repeatedly sense the same, unchanged environment.
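One way to see the difference between systematic error and precision is to take repeated readings of a known distance and summarize them. This is only a sketch: the readings are made-up values, and `summarize_readings` is a hypothetical helper built on Python's standard `statistics` module.

```python
from statistics import mean, stdev


def summarize_readings(readings, true_value):
    """Split repeated readings into a systematic part and a random part."""
    average = mean(readings)
    return {
        # How far the average sits from the truth: the systematic error.
        "bias": average - true_value,
        # How much readings scatter around their own average: the precision.
        "spread": stdev(readings),
    }


# Made-up readings (in cm) of a wall that is really 100 cm away.
readings_cm = [102.1, 101.8, 102.3, 101.9, 102.0, 101.9]
summary = summarize_readings(readings_cm, true_value=100.0)
print(summary)
```

In this made-up data the sensor is fairly precise (the readings agree closely with each other) but reads about 2 cm too far, which is the kind of systematic error that calibration can remove.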

There are different classes of sensors based on the type of data they record:

- Orientation/Heading: Compass, Gyro, Accelerometer

- Ranging: Ultrasonic, Sonar, Infrared. Ranging sensors measure distance using one of these methods:

  - Time of Flight

  - Phase Difference (offset of the reflected wave)

  - Triangulation
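For the phase difference method, a sensor compares the wave it sent with the wave that came back. A minimal sketch, assuming a light signal modulated at a single known frequency (the function name and example values are illustrative):

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def phase_shift_to_distance_m(phase_shift_rad, modulation_hz,
                              wave_speed_m_per_s=SPEED_OF_LIGHT_M_PER_S):
    """Estimate distance from the phase offset of a reflected wave."""
    if modulation_hz <= 0:
        raise ValueError("modulation frequency must be positive")
    # A round trip of 2 * d delays the wave by 2 * d / speed seconds,
    # which is a phase shift of 2 * pi * f * (2 * d / speed) radians.
    # Solving that for d gives the expression below.
    return wave_speed_m_per_s * phase_shift_rad / (4.0 * math.pi * modulation_hz)


# A half-cycle (pi radians) offset on a 10 MHz modulated signal.
print(phase_shift_to_distance_m(math.pi, 10e6))
```

Because phase repeats every full cycle, a single frequency can only measure distances unambiguously up to half a wavelength of the modulation signal.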

You may wonder how sensors are able to take information about the real world (analog) and convert it to digital information (binary) that a computer/robot can understand. This is done through analog-to-digital converters (ADCs). A good summary on how these work can be found here, but essentially the real-world signal is sampled at a fixed rate and each sample is rounded to one of a finite set of digital values, so some detail is lost in the conversion.
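The rounding step can be sketched with an idealized converter. The 3.3 V reference and 10-bit width below are assumptions chosen for illustration, and `adc_read` and `adc_to_voltage` are hypothetical names, not a real device API.

```python
def adc_read(voltage, v_ref=3.3, bits=10):
    """Convert an analog voltage to the nearest digital code (idealized)."""
    max_code = 2 ** bits - 1
    # Voltages outside the supported range saturate at the ends.
    clamped = min(max(voltage, 0.0), v_ref)
    # Scale to the code range and round to the nearest whole code.
    return int(clamped / v_ref * max_code + 0.5)


def adc_to_voltage(code, v_ref=3.3, bits=10):
    """Convert a digital code back to the voltage it represents."""
    return code / (2 ** bits - 1) * v_ref


code = adc_read(1.234)
recovered = adc_to_voltage(code)
# The recovered voltage is close to, but not exactly, the original.
print(code, recovered)
```

More bits mean smaller steps between codes, so less of the original signal is lost in the rounding.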

Sensors often also require calibration, which means adjusting settings on the sensor that alter the information it returns, to account for error within the sensor or system. Consider a weight scale that reads 2 pounds when nothing is on it. We could fix it by “calibrating” the displayed weight, subtracting two pounds so that it correctly shows 0 pounds when nothing is on it.
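The scale example can be written as a small sketch. It assumes the error is a constant offset, which is the simplest case; the function names are illustrative.

```python
def measure_zero_offset(empty_readings):
    """Average several readings taken with nothing on the scale."""
    return sum(empty_readings) / len(empty_readings)


def calibrate(raw_reading, zero_offset):
    """Remove the known offset from a raw reading."""
    return raw_reading - zero_offset


# The empty scale reads 2 pounds, so 2 pounds is the offset to remove.
offset = measure_zero_offset([2.0, 2.0, 2.0])
print(calibrate(2.0, offset))   # empty scale after calibration
print(calibrate(12.0, offset))  # a 10 pound object after calibration
```

Real sensors may also need a scale factor or a temperature correction, but the idea is the same: measure the error under known conditions, then correct for it.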

One of the most important “senses” for a robot is sight, which allows it to move around in an environment or manipulate objects. You can learn about how a robot sees in our next post.